The Ontology-Powered Operating System for the Modern Enterprise.

Your business model maintains itself, answers questions with cited evidence, and projects itself into the view that fits the question you are asking.

Coherence encodes the data, logic, and governance of your enterprise into one navigable model. Every answer cited. Every link auditable.

Built for banking, pharma, automotive, insurance, defence, and SaaS.

Model the components of Human + AI decisions.

Unify vast and fragmented sources into coherent objects, properties, and links.

Encode the data.

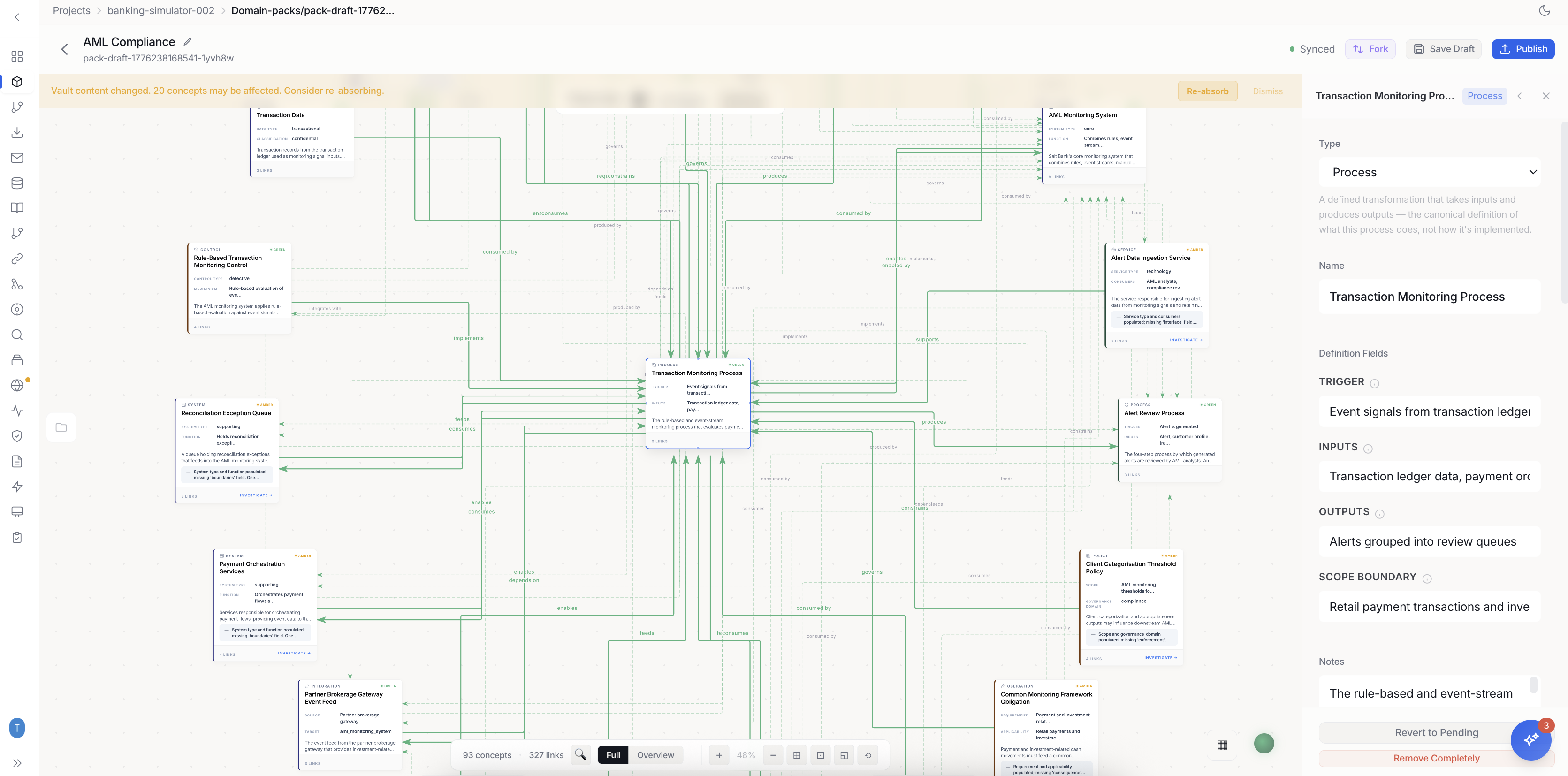

Domains define every business concept that matters.

Capture the logic.

Execute Python and TypeScript logic directly against the ontology. 90-day deployment cycles instead of 3-year integrations.

Govern the agents.

Ground every internal and external AI tool in your governed ontology. Plug Copilot, Cursor, and Devin into the ontology via MCP, so every output they produce traces back to it.

The representation gap is the root cause.

Why does the representation gap exist?

Legacy enterprise tools are read-only overlays. Data catalogs, observability suites, and BI dashboards only read state, so the model they describe starts to decay the moment it is created. Coherence keeps the model alive by connecting every business concept to the systems that implement it.

Every concept is linked to the APIs, schemas, code functions, and compliance rules that make it real. When any of those change, the model updates. When the model changes, the downstream systems know.
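To make the two-way link concrete, here is a minimal Python sketch of the idea, assuming a toy in-memory model. The names `Concept`, `Model`, `artifact_changed`, and the artifact identifiers are hypothetical and are not the Coherence SDK; the sketch only shows a change to a linked artifact bumping the concept and notifying downstream subscribers.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Concept:
    """A business concept and the artifacts that implement it (illustrative)."""
    name: str
    links: dict[str, str] = field(default_factory=dict)  # artifact id -> kind
    version: int = 1


class Model:
    """Toy in-memory model; a hypothetical stand-in, not a real SDK class."""

    def __init__(self) -> None:
        self.concepts: dict[str, Concept] = {}
        self.subscribers: list[Callable[[str, int], None]] = []

    def add(self, concept: Concept) -> None:
        self.concepts[concept.name] = concept

    def link(self, concept_name: str, artifact: str, kind: str) -> None:
        # kind is a free-form label such as "api", "schema", "function", "rule"
        self.concepts[concept_name].links[artifact] = kind

    def subscribe(self, callback: Callable[[str, int], None]) -> None:
        self.subscribers.append(callback)

    def artifact_changed(self, artifact: str) -> list[str]:
        """Bump every concept linked to the artifact and notify subscribers."""
        touched = []
        for concept in self.concepts.values():
            if artifact in concept.links:
                concept.version += 1
                touched.append(concept.name)
                for notify in self.subscribers:
                    notify(concept.name, concept.version)
        return touched


model = Model()
model.add(Concept("Customer"))
model.add(Concept("Invoice"))
model.link("Customer", "crm.customers", "schema")
model.link("Customer", "GET /v1/customers", "api")
model.link("Invoice", "billing.invoices", "schema")

# A downstream system registers interest in model changes.
notifications: list[tuple[str, int]] = []
model.subscribe(lambda name, version: notifications.append((name, version)))

# A schema change touches only the concepts linked to that schema.
touched = model.artifact_changed("crm.customers")
```

In this sketch only `Customer` is updated and announced; `Invoice` is left alone because nothing it links to has changed.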

The Ontology System encodes the semantic and structural elements of your business into a single navigable model.

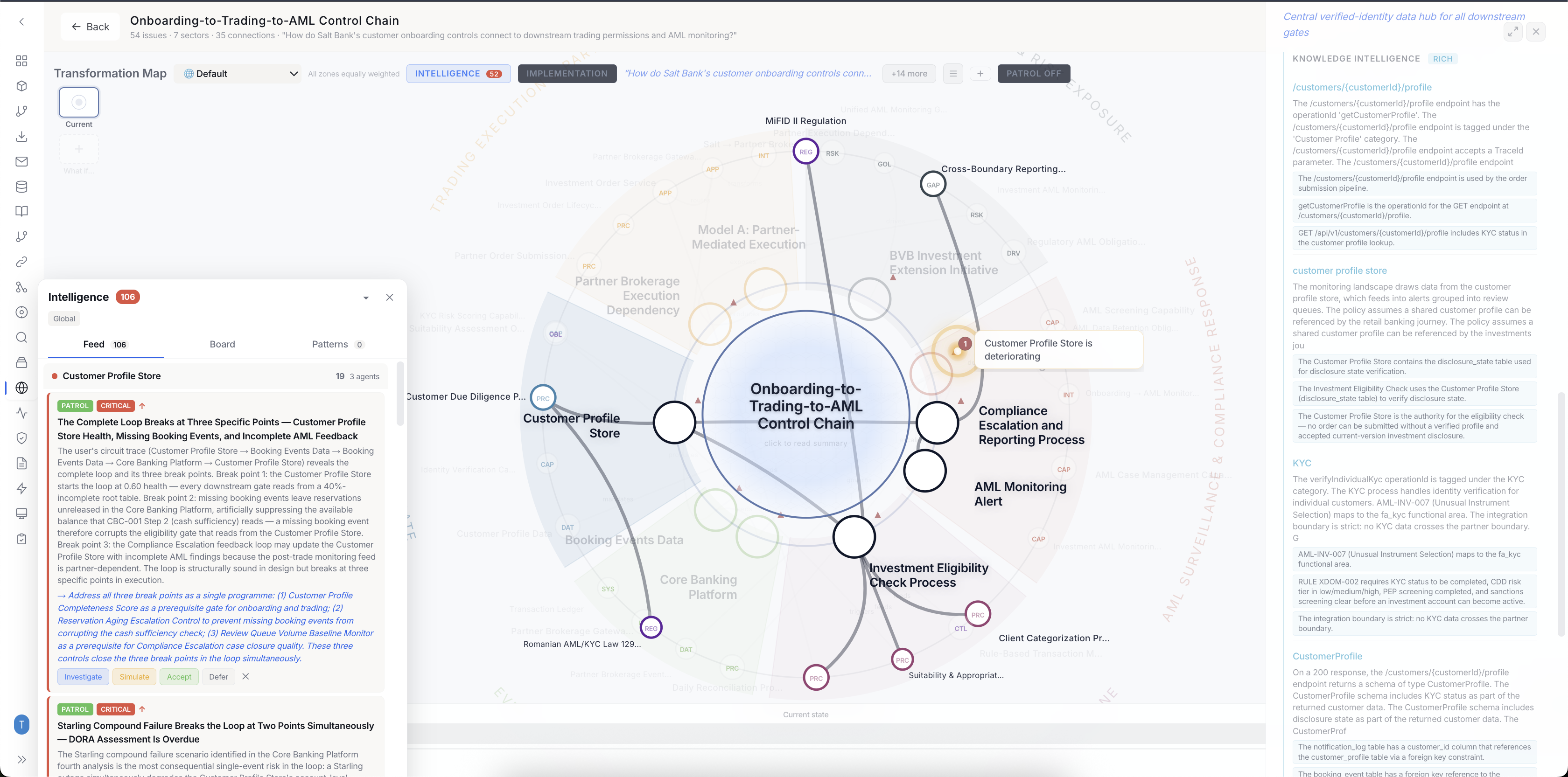

Weave your data and analytics directly into the daily decision-making happening across your core business and operational teams.

Your domain experts curate the meaning. Specialist agents maintain the connections. The platform enforces governance across every layer.

Integrate decision-making in a common logic layer.

Drive meaningful action as conditions evolve.

Treat the Ontology as a backend mapping reality.

Humans define meaning and govern the model.

Specialist agents maintain, enrich, and monitor.

Model the actions of the enterprise. Represent real-world actions as first-class primitives and define how they can be performed.
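One way to picture an action as a first-class primitive is a small value that carries its own rules for who may perform it and when. The Python sketch below is an assumption for illustration only: `Action`, `perform`, the `claims_adjuster` role, and the claim fields are hypothetical and do not describe the platform's actual API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """An action modeled as data: who may run it, when, and what it changes."""
    name: str
    allowed_roles: frozenset[str]
    precondition: Callable[[dict], bool]
    apply: Callable[[dict], dict]


def perform(action: Action, obj: dict, role: str) -> dict:
    """Check governance rules first, then return the updated object."""
    if role not in action.allowed_roles:
        raise PermissionError(f"{role} may not perform {action.name}")
    if not action.precondition(obj):
        raise ValueError(f"precondition failed for {action.name}")
    return action.apply(obj)


# Hypothetical insurance example: approving a submitted claim.
approve_claim = Action(
    name="approve_claim",
    allowed_roles=frozenset({"claims_adjuster"}),
    precondition=lambda claim: claim["status"] == "submitted",
    apply=lambda claim: {**claim, "status": "approved"},  # returns a new dict
)

claim = {"id": "C-1", "status": "submitted"}
approved = perform(approve_claim, claim, role="claims_adjuster")
```

Because the action is plain data plus two functions, the same definition can be invoked by a person or an agent, and the role and precondition checks run in both cases.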

The industry is converging on this.

We are already here.

These are not endorsements. These are the voices shaping enterprise AI, describing the architecture we already built.

“The ontology is the secret weapon. Nothing else comes close.”

“In the future, every company will have two factories: one for what they build and another for AI.”

“Scaling is hindered not by the models themselves, but by fragmented data, poor metadata practices, and a lack of unified data platforms.”

“Preventing AI hallucinations requires a multi-faceted approach that combines RAG with rigorous data curation and continuous model improvement.”

“We would like to simulate the entire product completely in full fidelity completely digitally. Essentially what we call Reality Meshes.”

“Humans give too much importance to language as the primary substrate of intelligence. Intelligence requires powerful world models.”

Treat the Ontology as a backend.

Build rich AI-enabled applications and services.

Python and TypeScript SDKs for writing logic against the ontology. CLI for pipelines. MCP for every major AI coding agent.

Create tools for any human or any agent. Teams from across the enterprise can compose reusable tools that encode their domain expertise directly into the Ontology.

Orchestrate complex operations at scale.

Millions of reads, millions of writes. One unified reality. The ontology stays consistent while every team writes to it simultaneously, and every decision made in the ontology propagates to the operational systems that execute it.

The first enterprise you can walk through.

Project any topic from your ontology into a self-organizing 3D world.

Rooted in ground truth. Connected to your live infrastructure.

Populated by AI agents that traverse and debate under human governance.

Early preview · Production 3D world assets in active development

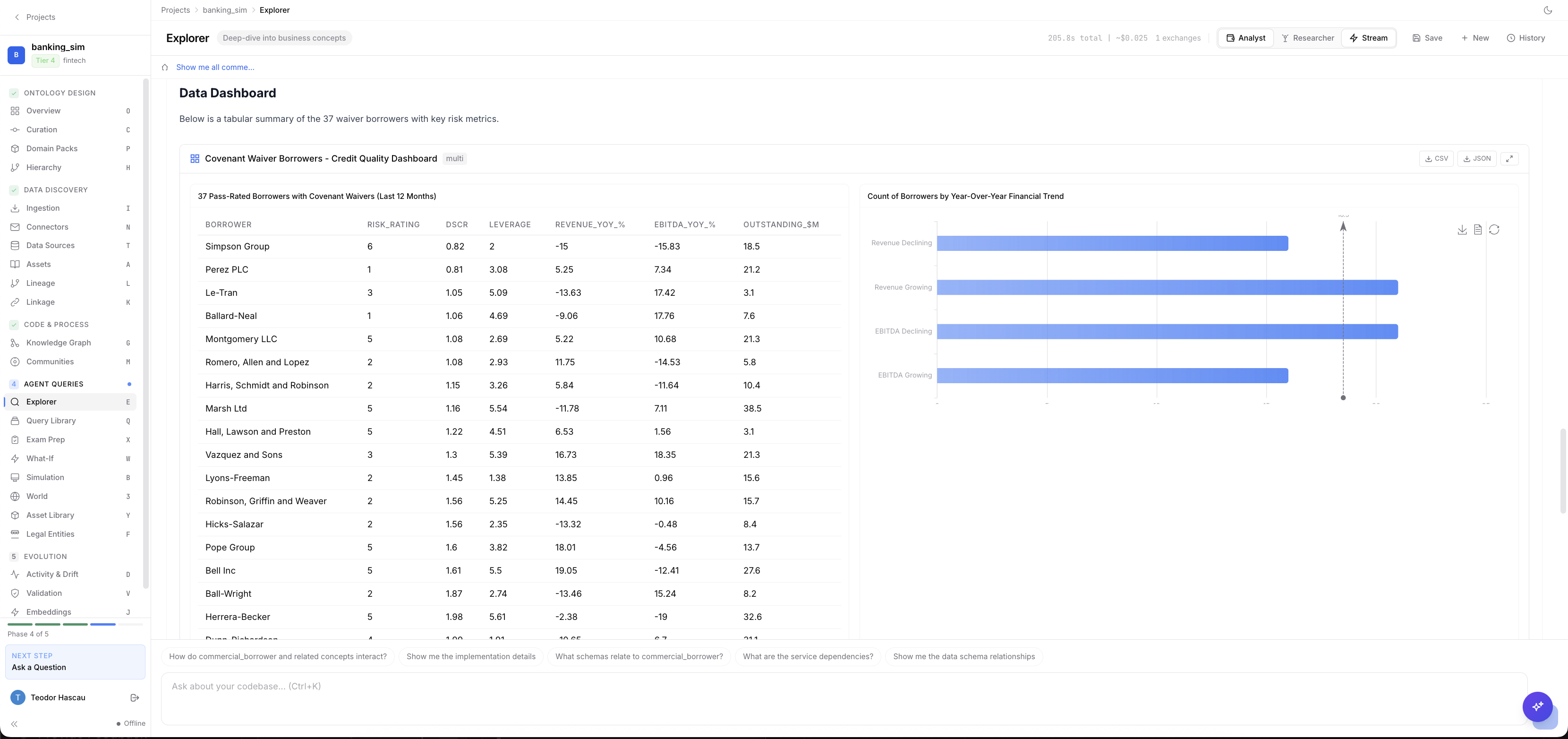

Ask in plain language. Counsel connects to your live databases, queries them through your business ontology, and generates real-time dashboards with cited evidence.

See it for your world.

Explore how the Ontology works for your role, your industry, and your specific problem.